Expert

- Metrics: Chidamber and Kemerer Object-Oriented metrics

- Metrics: OOD Metrics (Distance, Abstractness)

- Software Metrics by levels: Project, File, Function, Class, Method

- Cyclomatic complexity metric

- Maintainability Index

- Heavyweight software audits and inspections, Fagan inspections

Metrics: Chidamber and Kemerer Object-Oriented metrics

The Chidamber & Kemerer metrics suite originally consisted of six metrics calculated for each class: WMC, DIT, NOC, CBO, RFC and LCOM1. Other authors later extended the original suite with RFC´, LCOM2, LCOM3 and LCOM4.

- WMC: Weighted Methods Per Class

- DIT: Depth of Inheritance Tree

- NOC: Number of Children

- CBO: Coupling between Object Classes

- RFC and RFC´: Response for a Class

- LCOM1: Lack of Cohesion of Methods

WMC Weighted Methods Per Class

WMC is simply the method count for a class; that is, every method is given a weight of 1.

WMC = number of methods defined in class

Keep WMC down. A high WMC has been found to lead to more faults. Classes with many methods are likely to be more application-specific, limiting the possibility of reuse. WMC is a predictor of how much time and effort is required to develop and maintain the class. A large number of methods also means a greater potential impact on derived classes, since the derived classes inherit (some of) the methods of the base class. Search for high WMC values to spot classes that could be restructured into several smaller classes.
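As a rough illustration of the unit-weight count described above, the following Python sketch counts the methods defined directly in a class. The function name `wmc` and the example classes are hypothetical, and treating static and class methods as methods is an assumption of this sketch.

```python
import inspect


def wmc(cls: type) -> int:
    """Count methods defined directly in cls, one unit of weight each."""
    return sum(
        1
        for member in vars(cls).values()
        # Plain functions are instance methods; static and class methods
        # are stored as wrapper objects in the class namespace.
        if inspect.isfunction(member)
        or isinstance(member, (staticmethod, classmethod))
    )


class Account:
    def deposit(self, amount): ...

    def withdraw(self, amount): ...

    @staticmethod
    def validate(amount): ...


class SavingsAccount(Account):
    def add_interest(self): ...


print(wmc(Account))         # 3
print(wmc(SavingsAccount))  # 1 (inherited methods are not counted)
```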

DIT Depth of Inheritance Tree

DIT = maximum inheritance path from the class to the root class

The deeper a class is in the hierarchy, the more methods and variables it is likely to inherit, making it more complex. Deep trees as such indicate greater design complexity. Inheritance is a tool to manage complexity, not to increase it. As a positive factor, deep trees promote reuse because of method inheritance.

A high DIT has been found to increase faults. However, it’s not necessarily the classes deepest in the class hierarchy that have the most faults. Glasberg et al. found that the most fault-prone classes are the ones in the middle of the tree. According to them, root and deepest classes are consulted often and, due to this familiarity, have low fault-proneness compared to classes in the middle.

A recommended DIT is 5 or less. The Visual Studio .NET documentation recommends that DIT <= 5 because excessively deep class hierarchies are complex to develop. Some sources allow up to 8.
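A minimal Python sketch of the DIT definition, assuming that Python's built-in `object` is the root at depth 0 (so a class with no explicit base has DIT 1). The class names are hypothetical.

```python
def dit(cls: type) -> int:
    """Length of the longest inheritance path from cls up to the root."""
    if cls is object:
        return 0
    # With multiple inheritance, follow the deepest base class.
    return 1 + max(dit(base) for base in cls.__bases__)


class Vehicle: ...


class Car(Vehicle): ...


class SportsCar(Car): ...


print(dit(Vehicle))    # 1
print(dit(SportsCar))  # 3
```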

NOC Number of Children

NOC = number of immediate sub-classes of a class

NOC equals the number of immediate child classes derived from a base class. In Visual Basic .NET one uses the Inherits statement to derive sub-classes. In classic Visual Basic inheritance is not available and thus NOC is always zero.

NOC measures the breadth of a class hierarchy, where maximum DIT measures the depth. Depth is generally better than breadth, since it promotes reuse of methods through inheritance. NOC and DIT are closely related. Inheritance levels can be added to increase the depth and reduce the breadth.

A high NOC, a large number of child classes, can indicate several things:

- High reuse of base class. Inheritance is a form of reuse.

- Base class may require more testing.

- Improper abstraction of the parent class.

- Misuse of sub-classing. In such a case, it may be necessary to group related classes and introduce another level of inheritance.

- High NOC has been found to indicate fewer faults. This may be due to high reuse, which is desirable.
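In Python, the immediate child classes of a class can be listed at run time, which gives a simple sketch of NOC. The class names are hypothetical, and only subclasses that are currently loaded are counted.

```python
def noc(cls: type) -> int:
    """Number of immediate subclasses of cls that are currently loaded."""
    return len(cls.__subclasses__())


class Shape: ...


class Circle(Shape): ...


class Polygon(Shape): ...


class Square(Polygon): ...


print(noc(Shape))    # 2 (Circle and Polygon; Square is a grandchild)
print(noc(Polygon))  # 1
print(noc(Square))   # 0
```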

CBO Coupling between Object Classes

CBO = number of classes to which a class is coupled

Two classes are coupled when methods declared in one class use methods or instance variables defined by the other class. The uses relationship can go either way: both uses and used-by relationships are taken into account, but only once.

Multiple accesses to the same class are counted as one access. Only method calls and variable references are counted. Other types of reference, such as use of constants, calls to API declares, handling of events, use of user-defined types, and object instantiations are ignored. If a method call is polymorphic (either because of Overrides or Overloads), all the classes to which the call can go are included in the coupled count.

High CBO is undesirable. Excessive coupling between object classes is detrimental to modular design and prevents reuse. The more independent a class is, the easier it is to reuse it in another application. In order to improve modularity and promote encapsulation, inter-object class couples should be kept to a minimum. The larger the number of couples, the higher the sensitivity to changes in other parts of the design, and therefore maintenance is more difficult. High coupling has been found to indicate fault-proneness, so rigorous testing is needed.

How high is too high? According to Sahraoui, Godin & Miceli, CBO > 14 is too high.

A useful insight into the 'object-orientedness' of the design can be gained from the system-wide distribution of the class fan-out values. For example, a system in which a single class has very high fan-out and all other classes have low or zero fan-out is really a structured system, not an object-oriented one.
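The counting rule for CBO (uses and used-by relationships both count, each coupled class only once) can be sketched as follows. The `uses` map and its class names are hypothetical input that a code analyzer would have to produce.

```python
def cbo(cls: str, uses: dict[str, set[str]]) -> int:
    """Number of distinct classes coupled to cls in either direction."""
    outgoing = uses.get(cls, set())
    incoming = {other for other, targets in uses.items() if cls in targets}
    # The set union counts a class once even if it is both used and a user.
    return len((outgoing | incoming) - {cls})


# Hypothetical map: class -> classes whose methods or variables it uses.
uses = {
    "Order": {"Customer", "Invoice"},
    "Invoice": {"Order"},
    "Report": {"Order"},
}

print(cbo("Order", uses))     # 3 (Customer, Invoice, Report)
print(cbo("Customer", uses))  # 1
```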

RFC and RFC´ Response for a Class

The response set of a class is a set of methods that can potentially be executed in response to a message received by an object of that class. RFC is simply the number of methods in the set.

RFC = M + R (First-step measure)

RFC’ = M + R’ (Full measure)

M = number of methods in the class

R = number of remote methods directly called by methods of the class

R’ = number of remote methods called, recursively through the entire call tree

A given method is counted only once in R (and R’) even if it is executed by several methods M.

Since RFC specifically includes the methods of other classes that the class calls, it is also a measure of the potential communication between the class and other classes.

A large RFC has been found to indicate more faults. Classes with a high RFC are more complex and harder to understand. Testing and debugging is complicated. A worst case value for possible responses will assist in appropriate allocation of testing time.

RFC is the original definition of the measure. It counts only the first level of calls outside of the class. RFC’ measures the full response set, including methods called by the callers, recursively, until no new remote methods can be found. If the called method is polymorphic, all the possible remote methods executed are included in R and R’.

The use of RFC’ should be preferred over RFC. RFC was originally defined as a first-level metric because it was not practical to consider the full call tree in manual calculation. With an automated code analysis tool, getting RFC’ values is no longer problematic. As RFC’ considers the entire call tree and not just its first level, it provides a more thorough measurement of the code executed.
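The difference between the first-step and the full measure can be sketched on a small call graph. The function name, the `calls` map and the method names below are hypothetical; a real tool would extract the call graph from the code.

```python
def rfc_values(own: set[str], calls: dict[str, set[str]]) -> tuple[int, int]:
    """Return (RFC, RFC') for a class whose own methods are given in `own`."""
    # R: remote methods called directly by the class's own methods.
    direct: set[str] = set()
    for method in own:
        direct |= calls.get(method, set()) - own

    # R': remote methods reachable through the entire call tree.
    reachable = set(own)
    stack = list(own)
    while stack:
        current = stack.pop()
        for callee in calls.get(current, set()):
            if callee not in reachable:
                reachable.add(callee)
                stack.append(callee)
    full = reachable - own

    return len(own) + len(direct), len(own) + len(full)


cart_methods = {"Cart.add", "Cart.total"}
calls = {
    "Cart.add": {"Stock.reserve"},
    "Cart.total": {"Cart.add", "Tax.rate"},
    "Stock.reserve": {"Log.write"},
    "Tax.rate": {"Log.write"},
}

print(rfc_values(cart_methods, calls))  # (4, 5)
```

Here `Log.write` is only reached through other remote methods, so it is counted in RFC’ but not in RFC, and it is counted once even though two methods call it.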

LCOM1 Lack of Cohesion of Methods

The 6th metric in the Chidamber & Kemerer metrics suite is LCOM (or LOCOM), the lack of cohesion of methods. This metric has received a great deal of critique and several alternatives have been developed. In Project Metrics we call the original Chidamber & Kemerer metric LCOM1 to distinguish it from the alternatives.

Metrics: OOD Metrics (Distance, Abstractness)

In 1994 Robert “Uncle Bob” Martin proposed a group of object-oriented metrics that remain popular today. Unlike other object-oriented metrics, they do not represent the full set of attributes for assessing an individual object-oriented design; they focus only on the relationships between packages in the project.

The level of detail of Martin’s metrics is lower than that of the CK metrics.

Martin’s metrics include:

- Efferent Coupling (Ce)

- Afferent Coupling (Ca)

- Instability (I)

- Abstractness (A)

- Normalized Distance from Main Sequence (D)

Efferent Coupling (Ce)

This metric is used to measure interrelationships between classes. As defined, it is the number of classes in a given package that depend on classes in other packages. It enables us to measure the vulnerability of the package to changes in the packages on which it depends.

Pic. 1 – Outgoing dependencies

In Pic. 1 it can be seen that class A has outgoing dependencies to 3 other classes, which is why the metric Ce for this class is 3.

A high value of the metric (Ce > 20) indicates instability of a package: a change in any of the numerous external classes can force changes to the package. Preferred values for Ce are in the range of 0 to 20; higher values cause problems with the maintenance and development of the code.

Afferent Coupling (Ca)

This metric complements Ce and is used to measure the other type of dependency between packages, i.e. incoming dependencies. It enables us to measure the sensitivity of the remaining packages to changes in the analysed package.

Pic. 2 – Incoming dependencies

In Pic. 2 it can be seen that class A has only 1 incoming dependency (from class X), which is why the value of the metric Ca equals 1.

High values of metric Ca usually suggest high component stability. This is because many other classes depend on the class. Therefore, it can’t be modified significantly, because the probability that such changes spread to its dependents increases.

Preferred values for the metric Ca are in the range of 0 to 500.
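The two coupling counts can be sketched from a dependency map. This sketch follows the counting used in the picture examples (number of outgoing and incoming dependencies of a component); the names B, C and D are hypothetical stand-ins for the three classes A depends on.

```python
def efferent(component: str, depends_on: dict[str, set[str]]) -> int:
    """Ce: number of other components this component depends on."""
    return len(depends_on.get(component, set()) - {component})


def afferent(component: str, depends_on: dict[str, set[str]]) -> int:
    """Ca: number of other components that depend on this component."""
    return sum(
        1
        for other, targets in depends_on.items()
        if other != component and component in targets
    )


# A depends on three classes; X depends on A.
depends_on = {
    "A": {"B", "C", "D"},
    "X": {"A"},
}

print(efferent("A", depends_on))  # 3
print(afferent("A", depends_on))  # 1
```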

Instability (I)

This metric is used to measure the relative susceptibility of a class to changes. By definition, instability is the ratio of outgoing dependencies to all package dependencies, and it takes values from 0 to 1.

The metric is defined according to the formula:

I = Ce / (Ce + Ca)

Where: Ce – outgoing dependencies, Ca – incoming dependencies

Pic. 3 – Instability

In Pic. 3 it can be seen that class A has 3 outgoing and 1 incoming dependencies; therefore the value of metric I equals 3 / (3 + 1) = 0.75.

On the basis of value of metric I we can distinguish two types of components:

- Components with many outgoing dependencies and few incoming ones (I close to 1), which are rather unstable because they can be changed easily;

- Components with many incoming dependencies and few outgoing ones (I close to 0), which are more difficult to modify because of their greater responsibility.

Preferred values for the metric I fall within the ranges of 0 to 0.3 or 0.7 to 1. Packages should be either very stable or very unstable; packages of intermediate stability should be avoided.
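Taking instability as the ratio of outgoing dependencies to all dependencies, as defined above, a small sketch reproduces the value from the example and checks it against the preferred ranges. Returning 0 for a component with no dependencies at all is an assumption of this sketch, since the ratio is undefined in that case.

```python
def instability(ce: int, ca: int) -> float:
    """Ratio of outgoing dependencies to all dependencies."""
    total = ce + ca
    if total == 0:
        return 0.0  # assumption: an isolated component is treated as stable
    return ce / total


def in_preferred_range(i: float) -> bool:
    """True if I lies in 0 to 0.3 or in 0.7 to 1."""
    return 0.0 <= i <= 0.3 or 0.7 <= i <= 1.0


i = instability(ce=3, ca=1)
print(i)                        # 0.75
print(in_preferred_range(i))    # True
print(in_preferred_range(0.5))  # False
```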

Abstractness (A)

This metric is used to measure the degree of abstraction of the package and is somewhat similar to instability. By definition, abstractness is the ratio of the number of abstract classes in the package to the number of all classes.

The metric is defined according to the formula:

A = Tabstract / (Tabstract + Tconcrete)

Where: Tabstract – number of abstract classes in a package, Tconcrete – number of concrete classes in a package

Preferred values for the metric A are extreme values close to 0 or 1. Packages that are stable (metric I close to 0), which means they depend very little on other packages, should also be abstract (metric A close to 1). In turn, very unstable packages (metric I close to 1) should consist of concrete classes (metric A close to 0).
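As an illustration, abstractness can be sketched in Python by treating a list of classes as a package and counting those that still have unimplemented abstract methods. The class names are hypothetical, and returning 0 for an empty package is an assumption.

```python
import inspect
from abc import ABC, abstractmethod


def abstractness(classes: list[type]) -> float:
    """Share of abstract classes among all classes of a package."""
    if not classes:
        return 0.0  # assumption: an empty package counts as concrete
    n_abstract = sum(1 for cls in classes if inspect.isabstract(cls))
    return n_abstract / len(classes)


class Repository(ABC):
    @abstractmethod
    def load(self, key: str) -> str: ...


class FileRepository(Repository):
    def load(self, key: str) -> str:
        return f"file:{key}"


class MemoryRepository(Repository):
    def load(self, key: str) -> str:
        return f"memory:{key}"


package = [Repository, FileRepository, MemoryRepository]
print(abstractness(package))  # one abstract class out of three
```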

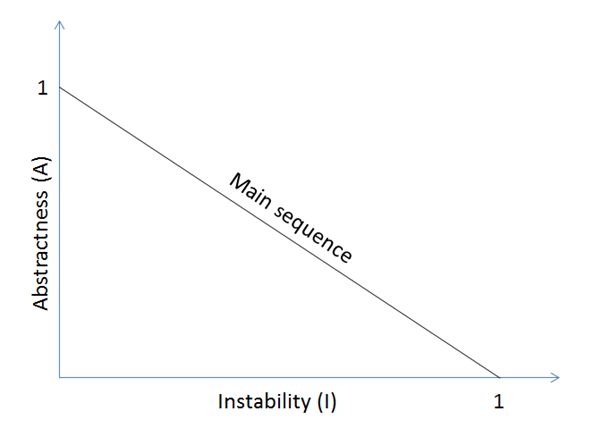

Additionally, it is worth mentioning that combining abstractness and stability enabled Martin to formulate a thesis about the existence of a main sequence (Pic. 4).

Pic. 4 – Main sequence

In the optimal case, the instability of a class is compensated by its abstractness, so that I + A = 1. Well-designed classes should cluster around the end points of this graph, along the main sequence.

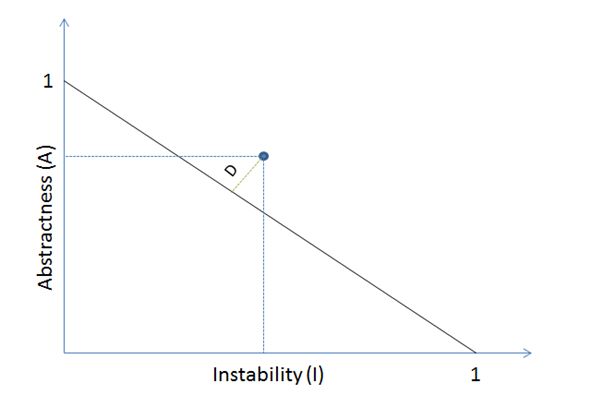

Normalized Distance from Main Sequence (D)

This metric is used to measure the balance between stability and abstractness and is calculated using the following formula:

D = |A + I - 1|

Where: A – abstractness, I – instability

The value of the metric D may be interpreted in the following way: if we put a given class on the graph of the main sequence (Pic. 5), its distance from the main sequence will be proportional to the value of D.

Pic. 5 – Normalized distance from Main Sequence

The value of the metric D should be as low as possible, so that components are located close to the main sequence. In addition, two extremely unfavourable cases are considered:

- A = 0 and I = 0: the package is extremely stable and concrete. This situation is undesirable because the package is very rigid and can’t be extended;

- A = 1 and I = 1: a rather impossible situation, because a completely abstract package must have some connection to the outside so that an instance implementing the functionality defined in its abstract classes can be created.
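A short sketch of the distance calculation, assuming the usual definition of the normalized distance, D = |A + I - 1|. It evaluates the two unfavourable corner cases from the list above and one point that lies on the main sequence.

```python
def normalized_distance(a: float, i: float) -> float:
    """Normalized distance of a component from the main sequence A + I = 1."""
    return abs(a + i - 1)


print(normalized_distance(a=0.0, i=0.0))    # 1.0, stable and concrete
print(normalized_distance(a=1.0, i=1.0))    # 1.0, unstable and abstract
print(normalized_distance(a=0.25, i=0.75))  # 0.0, on the main sequence
```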

Software Metrics by levels: Project, File, Function, Class, Method

Project Level

- Number of Interfaces (INTERFS)

- Number of Abstract Classes (CLSa)

- Number of Concrete Classes (CLSc)

- Number of Classes (CLS)

- Number of Root Classes (ROOTS)

- Number of Leaf Classes (LEAFS)

- Maximum Depth of Inheritance Tree (maxDIT)

File Level

- Lines of Code (LOC)

- Comment Lines of Code (CLOC)

- Non-Comment Lines of Code (NCLOC)

- Lines of Executable Code (ELOC)

- Lines of Executed Code

- Code Coverage

Function Level

- Lines of Code (LOC)

- Lines of Executable Code (ELOC)

- Lines of Executed Code

- Code Coverage

- Cyclomatic Complexity

- Change Risk Analysis and Predictions (CRAP) Index

Class Level

- Lines of Code (LOC)

- Lines of Executable Code (ELOC)

- Lines of Executed Code

- Code Coverage

- Attribute Inheritance Factor (AIF)

- Attribute Hiding Factor (AHF)

- Class Size (CSZ)

- Class Interface Size (CIS)

- Depth of Inheritance Tree (DIT)

- Method Inheritance Factor (MIF)

- Method Hiding Factor (MHF)

- Number of Children (NOC)

- Number of Interfaces Implemented (IMPL)

- Number of Variables (VARS)

- Number of Non-Private Variables (VARSnp)

- Number of Inherited Variables (VARSi)

- Polymorphism Factor (PF)

- Weighted Methods per Class (WMC)

- Weighted Non-Private Methods per Class (WMCnp)

- Weighted Inherited Methods per Class (WMCi)

Method Level

- Lines of Code (LOC)

- Lines of Executable Code (ELOC)

- Lines of Executed Code

- Code Coverage

- Cyclomatic Complexity

- Change Risk Analysis and Predictions (CRAP) Index

Cyclomatic complexity metric

Cyclomatic Complexity

Measures the structural complexity of the code. It is calculated by counting the number of linearly independent paths through the control flow of the program. A program that has complex control flow will require more tests to achieve good code coverage and will be less maintainable.
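A common approximation of cyclomatic complexity is the number of decision points plus one. The sketch below applies it to Python source using the standard `ast` module; the set of node types counted is a simplifying assumption, and real tools handle more constructs.

```python
import ast

# Node types that each add one branch (a simplified, assumed selection).
BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp)


def cyclomatic_complexity(source: str) -> int:
    """Approximate complexity as 1 + number of decision points."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # "a and b and c" adds two extra short-circuit paths.
            complexity += len(node.values) - 1
    return complexity


SAMPLE = """
def classify(n):
    if n < 0:
        return "negative"
    for d in range(2, n):
        if n % d == 0 and d > 1:
            return "composite"
    return "other"
"""

print(cyclomatic_complexity(SAMPLE))  # 5: base path + if + for + if + and
```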

Maintainability Index

Maintainability Index

Calculates an index value between 0 and 100 that represents the relative ease of maintaining the code. A high value means better maintainability. Color-coded ratings can be used to quickly identify trouble spots in your code. A green rating is between 20 and 100 and indicates that the code has good maintainability. A yellow rating is between 10 and 19 and indicates that the code is moderately maintainable. A red rating is between 0 and 9 and indicates low maintainability.
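The rating thresholds above map directly to a small lookup. The function name is hypothetical; only the ranges are taken from the text.

```python
def maintainability_rating(index: float) -> str:
    """Map a maintainability index (0 to 100) to its color rating."""
    if not 0 <= index <= 100:
        raise ValueError("maintainability index must be between 0 and 100")
    if index >= 20:
        return "green"   # good maintainability
    if index >= 10:
        return "yellow"  # moderately maintainable
    return "red"         # low maintainability


for value in (85, 19, 9):
    print(value, maintainability_rating(value))
```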

Heavyweight software audits and inspections, Fagan inspections

Software inspection

An inspection is one of the most common sorts of review practices found in software projects. The goal of the inspection is to identify defects. Commonly inspected work products include software requirements specifications and test plans. In an inspection, a work product is selected for review and a team is gathered for an inspection meeting to review the work product. A moderator is chosen to moderate the meeting. Each inspector prepares for the meeting by reading the work product and noting each defect. In an inspection, a defect is any part of the work product that will keep an inspector from approving it. For example, if the team is inspecting a software requirements specification, each defect will be text in the document which an inspector disagrees with.

Inspection process

The process should have entry criteria that determine if the inspection process is ready to begin. This prevents unfinished work products from entering the inspection process. The entry criteria might be a checklist including items such as "The document has been spell-checked".

The stages in the inspections process are: Planning, Overview meeting, Preparation, Inspection meeting, Rework and Follow-up. The Preparation, Inspection meeting and Rework stages might be iterated.

- Planning: The inspection is planned by the moderator.

- Overview meeting: The author describes the background of the work product.

- Preparation: Each inspector examines the work product to identify possible defects.

- Inspection meeting: During this meeting the reader reads through the work product, part by part, and the inspectors point out the defects for every part.

- Rework: The author makes changes to the work product according to the action plans from the inspection meeting.

- Follow-up: The changes by the author are checked to make sure everything is correct.

The process is ended by the moderator when it satisfies some predefined exit criteria. The term inspection refers to one of the most important elements of the entire process that surrounds the execution and successful completion of a software engineering project.

Inspection roles

During an inspection the following roles are used.

- Author: The person who created the work product being inspected.

- Moderator: This is the leader of the inspection. The moderator plans the inspection and coordinates it.

- Reader: The person reading through the documents, one item at a time. The other inspectors then point out defects.

- Recorder/Scribe: The person that documents the defects that are found during the inspection.

- Inspector: The person that examines the work product to identify possible defects.

Related inspection types

Code review

A code review can be done as a special kind of inspection in which the team examines a sample of code and fixes any defects in it. In a code review, a defect is a block of code which does not properly implement its requirements, which does not function as the programmer intended, or which is not incorrect but could be improved (for example, it could be made more readable or its performance could be improved). In addition to helping teams find and fix bugs, code reviews are useful both for cross-training programmers on the code being reviewed and for helping junior developers learn new programming techniques.

Peer reviews

Peer reviews are considered an industry best-practice for detecting software defects early and learning about software artifacts. Peer Reviews are composed of software walkthroughs and software inspections and are integral to software product engineering activities. A collection of coordinated knowledge, skills, and behaviors facilitates the best possible practice of Peer Reviews. The elements of Peer Reviews include the structured review process, standard of excellence product checklists, defined roles of participants, and the forms and reports.

Software inspections are the most rigorous form of Peer Reviews and fully utilize these elements in detecting defects. Software walkthroughs draw selectively upon the elements in assisting the producer to obtain the deepest understanding of an artifact and reaching a consensus among participants. Measured results reveal that Peer Reviews produce an attractive return on investment obtained through accelerated learning and early defect detection. For best results, Peer Reviews are rolled out within an organization through a defined program of preparing a policy and procedure, training practitioners and managers, defining measurements and populating a database structure, and sustaining the roll out infrastructure.

Fagan inspection

A Fagan inspection is a process of trying to find defects in documents (such as source code or formal specifications) during various phases of the software development process. It is named after Michael Fagan, who is credited with being the inventor of formal software inspections.

Fagan inspection defines a process as a certain activity with pre-specified entry and exit criteria. In every process for which entry and exit criteria are specified, Fagan inspections can be used to validate whether the output of the process complies with the exit criteria specified for the process. Fagan inspection uses a group review method to evaluate the output of a given process.

Examples

Examples of activities for which Fagan inspection can be used are:

- Requirement specification

- Software/Information System architecture

- Programming (for example for iterations in XP or DSDM)

- Software testing (for example when creating test scripts)

Usage

The software development process is a typical application of Fagan inspection. As the cost of remedying a defect is 10 to 100 times lower in the early operations than in the maintenance phase,[1] it is essential to find defects as close to the point of insertion as possible. This is done by inspecting the output of each operation and comparing that to the output requirements, or exit criteria, of that operation.

Criteria

Entry criteria are the criteria or requirements which must be met to enter a specific process. For example, for Fagan inspections the high- and low-level documents must comply with specific entry criteria before they can be used for a formal inspection process.

Exit criteria are the criteria or requirements which must be met to complete a specific process. For example, for Fagan inspections the low-level document must comply with specific exit criteria (as specified in the high-level document) before the development process can be taken to the next phase.

The exit criteria are specified in a high-level document, which is then used as the standard to which the operation result (low-level document) is compared during the inspection. Any failure of the low-level document to satisfy the requirements specified in the high-level document is called a defect (and can be further categorized as major or minor). Minor defects do not threaten the correct functioning of the software, but may be small errors such as spelling mistakes or unaesthetic positioning of controls in a graphical user interface.

Typical operations

A typical Fagan inspection consists of the following operations:

Planning

- Preparation of materials

- Arranging of participants

- Arranging of meeting place

Overview

- Group education of participants on the materials under review

- Assignment of roles

Preparation

- The participants review the item to be inspected and the supporting material to prepare for the meeting, noting any questions or possible defects

- The participants prepare their roles

Inspection meeting

- Actual finding of defects

Rework

- Rework is the step in software inspection in which the defects found during the inspection meeting are resolved by the author, designer or programmer. On the basis of the list of defects the low-level document is corrected until the requirements in the high-level document are met.

Follow-up

- In the follow-up phase of software inspections, all defects found in the inspection meeting should have been corrected, since they were fixed in the rework phase. The moderator is responsible for verifying that this is indeed the case: that all defects are fixed and that no new defects were introduced while fixing the initial ones. It is crucial that all defects be corrected, as the cost of fixing them in a later phase of the project can be 10 to 100 times higher than the current cost.[1]

Fagan inspection basic model

Follow-up

In the follow-up phase of a Fagan inspection, defects fixed in the rework phase should be verified. The moderator is usually responsible for verifying rework. Sometimes fixed work can be accepted without being verified, such as when the defect was trivial. In non-trivial cases, a full re-inspection is performed by the inspection team (not only the moderator).

If verification fails, go back to the rework process.
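The loop between rework and follow-up can be sketched as below. All names (`Defect`, `follow_up`, `fix_one`) and the `max_rounds` limit are hypothetical; the sketch only illustrates that rework repeats until verification succeeds.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Defect:
    description: str
    major: bool
    fixed: bool = False


def follow_up(
    defects: list[Defect],
    rework: Callable[[list[Defect]], None],
    verify: Callable[[Defect], bool],
    max_rounds: int = 5,
) -> int:
    """Repeat rework until every defect passes verification.

    Returns the number of rework rounds that were needed.
    """
    for round_no in range(1, max_rounds + 1):
        rework(defects)
        if all(verify(defect) for defect in defects):
            return round_no
        # Verification failed: go back to rework.
    raise RuntimeError("defects still open after the allowed rework rounds")


def fix_one(defects: list[Defect]) -> None:
    """Hypothetical rework step: the author fixes one open defect per round."""
    for defect in defects:
        if not defect.fixed:
            defect.fixed = True
            return


defects = [
    Defect("missing null check", major=True),
    Defect("typo in label", major=False),
]
rounds = follow_up(defects, rework=fix_one, verify=lambda d: d.fixed)
print(rounds)  # 2
```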

Roles

The inspection process is normally performed by members of the same team that is implementing the project. The participants fulfill different roles within the inspection process:

- Author/Designer/Coder: the person who wrote the low-level document

- Reader: paraphrases the low-level document

- Reviewers: review the low-level document from a testing standpoint

- Moderator: responsible for the inspection session, functions as a coach

Benefits and results

By using inspections, the number of errors in the final product can decrease significantly, creating a higher-quality product. Over time the team can even avoid errors, because the inspection sessions give insight into the most frequently made errors in both design and coding, so errors can be prevented at their root. Continuously improving the inspection process lets the team put these insights to further use.

Together with the qualitative benefits mentioned above, major cost improvements can be reached, as the avoidance and earlier detection of errors reduce the amount of resources needed for debugging in later phases of the project.

In practice, very positive results have been reported by large corporations such as IBM, indicating that 80% to 90% of defects can be found and that savings in resources of up to 25% can be reached.